Know What Your AI Agents Actually Do

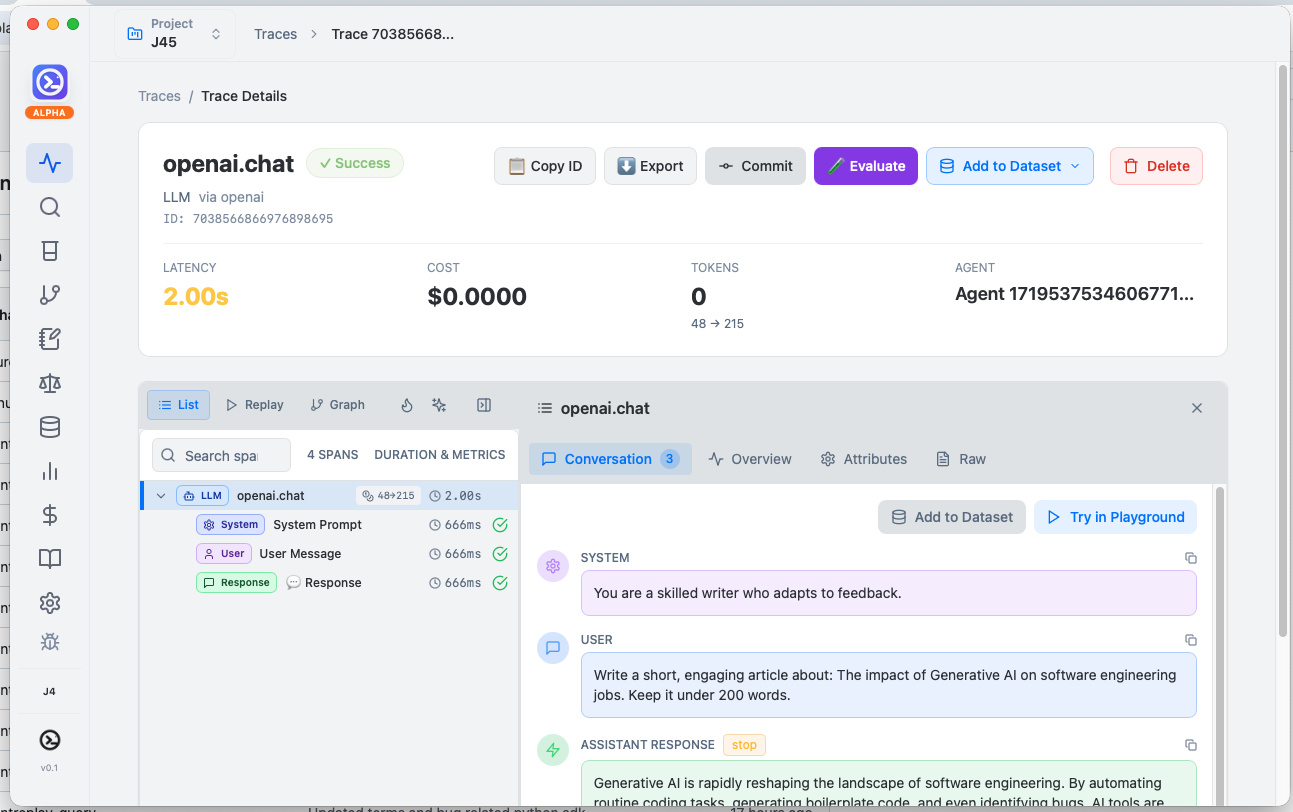

Your AI agents make thousands of decisions you never see. AgentReplay captures every call, every token, every cost — so you can debug failures, catch hallucinations, and ship with confidence. All without sending a single byte to the cloud.

AI Agents Fail in Ways You Can't Predict

You wouldn't deploy a web app without logs. Why would you deploy an AI agent without observability?

Agents Hallucinate Silently

Your agent returns confident answers that are completely wrong. Without tracing, you'll never know which retrieval step failed or which prompt caused the drift.

Costs Spiral Without Warning

A single runaway agent loop can burn through your API budget in minutes. AgentReplay tracks every token and dollar in real time, before the invoice shocks you.

Cloud Platforms See Everything

Sending your prompts, user data, and business logic to cloud observability platforms creates compliance risks. AgentReplay runs entirely on your machine. Nothing leaves.

Full Observability for Claude Code & AI Coding Tools

See exactly what Claude Code, Cursor, and other AI coding tools are doing behind the scenes. Every tool call, every file edit, every conversation — traced, stored, and searchable with persistent memory across sessions.

    ┌─ Session: refactor-auth-module
    │  Duration: 14m 32s │ Cost: $0.47
    │
    ├─ 🔍 Read src/auth/handler.rs
    │  └─ 2,847 tokens analyzed
    │
    ├─ 🧠 Reasoning: "The OAuth flow has
    │  │  a race condition in token refresh"
    │  └─ Confidence: 0.94
    │
    ├─ ✏️ Edit src/auth/handler.rs
    │  └─ +47 lines, -12 lines
    │
    ├─ 🔧 bash: cargo test auth
    │  └─ 23 tests passed ✓
    │
    ├─ 📝 Memory saved:
    │  └─ "auth module uses PKCE flow,
    │      tokens stored in secure keychain"
    │
    └─ ✅ Session complete
       Total: 8 tool calls │ 12,451 tokens

Everything You Need. Nothing You Don't.

A complete observability platform that runs on your machine. No accounts, no API keys, no cloud dependencies.

Trace Every LLM Call

OpenTelemetry-native ingestion captures every request, response, and token usage. Auto-instrument OpenAI, Anthropic, LangChain, and LlamaIndex with a single line.

20+ Built-in Evaluators

Hallucination detection, RAGAS faithfulness, G-Eval quality scoring, toxicity checks, tool correctness, and adversarial robustness testing — out of the box.

MCP Protocol Server

Your traces and memory become context for AI tools. Claude, Cursor, and any MCP client can query your agent history for smarter, context-aware responses.

Prompt Versioning & A/B Tests

Semantic versioning, deployment environments, canary rollouts, and traffic splitting. Know which prompt version performs best in production.
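
To make traffic splitting concrete, here is a minimal sketch of one common approach: hash a session ID to a stable point in [0, 1) and map it onto weighted prompt versions. This is an illustration under stated assumptions, not AgentReplay's implementation; the function name `pick_prompt_version` and the version labels are hypothetical.

```python
import hashlib


def pick_prompt_version(session_id: str, weights: dict[str, float]) -> str:
    """Deterministically map a session to a prompt version by weight.

    Hypothetical helper: the same session always lands on the same version,
    and versions receive traffic in proportion to their weights.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")

    # Hash the session id to a stable point in [0, 1).
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    point = int.from_bytes(digest[:8], "big") / 2**64

    # Walk the versions in a fixed order and pick the first bucket
    # whose cumulative share covers the point.
    cumulative = 0.0
    for version in sorted(weights):
        cumulative += weights[version] / total
        if point < cumulative:
            return version
    return sorted(weights)[-1]  # guard against floating-point rounding


# Example: a canary rollout sending roughly 10% of sessions to the new prompt.
ROLLOUT = {"1.2.0": 0.9, "1.3.0-canary": 0.1}
```

Because the assignment depends only on the session ID and the weights, a session keeps its prompt version across restarts, which keeps per-version comparisons clean.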

Persistent Agent Memory

Observations, sessions, and context that survive restarts. Your agent never forgets what it learned — and neither do you.

Real-Time Cost Tracking

Per-model, per-session, per-project cost breakdowns. Set budget alerts before costs spiral. Know exactly what each agent workflow costs.
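
The arithmetic behind a cost breakdown and a budget alert is simple enough to sketch. The example below is an assumption-laden illustration, not AgentReplay's code: the `CostTracker` class, the model names, and the per-token prices are all hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical prices in USD per 1M tokens; real prices vary by provider and date.
PRICES_PER_MILLION = {
    "model-a": {"input": 2.50, "output": 10.00},
    "model-b": {"input": 0.50, "output": 1.50},
}


@dataclass
class CostTracker:
    """Accumulates cost per model and per session against a budget."""

    budget_usd: float
    by_model: dict = field(default_factory=lambda: defaultdict(float))
    by_session: dict = field(default_factory=lambda: defaultdict(float))

    def record(self, session: str, model: str,
               input_tokens: int, output_tokens: int) -> float:
        """Add one LLM call and return its cost in USD."""
        price = PRICES_PER_MILLION[model]
        cost = (input_tokens * price["input"]
                + output_tokens * price["output"]) / 1_000_000
        self.by_model[model] += cost
        self.by_session[session] += cost
        return cost

    @property
    def total(self) -> float:
        return sum(self.by_model.values())

    def over_budget(self) -> bool:
        """True once accumulated spend reaches the budget."""
        return self.total >= self.budget_usd
```

Checking `over_budget()` after every recorded call is what lets an alert fire in the middle of a runaway loop rather than at the end of the billing period.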

Semantic Vector Search

HNSW index with local ONNX embeddings and 32× compression. Search your traces by meaning, not just keywords. Find the needle in a million spans.
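
The page does not say how the 32× compression is achieved. One technique that yields exactly that ratio is binary quantization: each 32-bit float dimension is reduced to a single sign bit, and vectors are compared by Hamming distance. The sketch below assumes that technique purely for illustration, and it uses a brute-force scan rather than an HNSW graph; all function names are hypothetical.

```python
def binarize(vector: list[float]) -> int:
    """Pack the sign of each dimension into one bit of an integer.

    A float32 vector uses 32 bits per dimension; this keeps 1 bit,
    which is where a 32x size reduction would come from.
    """
    bits = 0
    for i, value in enumerate(vector):
        if value > 0:
            bits |= 1 << i
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bit positions in which two packed vectors differ."""
    return bin(a ^ b).count("1")


def nearest(query: list[float], corpus: dict[str, list[float]]) -> str:
    """Return the key of the corpus vector closest to the query.

    Brute-force scan for clarity; a real index would avoid comparing
    against every stored vector.
    """
    q = binarize(query)
    return min(corpus, key=lambda key: hamming(q, binarize(corpus[key])))
```

Sign bits discard magnitude, so systems that quantize this way often re-rank the top candidates with full-precision vectors; whether AgentReplay does so is not stated here.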

Privacy by Architecture

Not just a policy — it's the architecture. No network calls, no cloud sync, no telemetry. PII scrubbing, sensitivity flags, and compliance reports built in.
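
As a rough idea of what PII scrubbing involves, the sketch below redacts email addresses and API-key-like strings before a span would be stored. It is a simplified illustration, not AgentReplay's scrubber: the patterns, the `sk-` key prefix, and the placeholder tokens are assumptions, and real scrubbing needs far broader coverage.

```python
import re

# Illustrative patterns only; production scrubbers cover many more PII types.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
API_KEY = re.compile(r"\bsk-[A-Za-z0-9]{16,}\b")  # assumed key format


def scrub(text: str) -> str:
    """Replace emails and key-like secrets with fixed placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return API_KEY.sub("[SECRET]", text)
```

Running the scrubber at ingestion time, before anything is written to disk, means the raw values never reach storage or search indexes.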

Beautiful Desktop App

Native macOS, Windows, and Linux app. Real-time trace visualization, model comparison, prompt playground — all in a polished UI that feels like home.

Built with Rust. Modular by Design.

16 focused crates — each with a single responsibility. Powered by SochDB for ACID storage and HNSW for sub-millisecond vector search.

Why Not Just Use a Cloud Platform?

Cloud platforms are powerful — but they see your data. AgentReplay gives you the same capabilities with zero compromise on privacy.

| Capability | AgentReplay | Langfuse | Arize | Braintrust |
|---|---|---|---|---|
| 100% Local / Offline | ✓ | ✗ | ✗ | ✗ |
| Claude Code Observability | ✓ | ✗ | ✗ | ✗ |
| Persistent Agent Memory | ✓ | ✗ | ✗ | ✗ |
| Built-in MCP Server | ✓ | Partial | ✗ | ✗ |
| 20+ Evaluators | ✓ | ✓ | ✓ | ✓ |
| Prompt Versioning | ✓ | ✓ | ✓ | ✓ |
| Native Desktop App | ✓ | ✗ | ✗ | ✗ |
| No Account Required | ✓ | ✗ | ✗ | ✗ |
| Data Stays on Device | ✓ | Self-host | ✗ | ✗ |
| Free Forever | ✓ | Freemium | Freemium | Freemium |

Instrument in 3 Lines. Debug in Seconds.

Add full observability to any AI agent without changing your business logic. Works with every major LLM provider.

    import agentreplay
    from openai import OpenAI

    # One line: traces flow to your local AgentReplay server
    agentreplay.init()

    # Wrap your client. That's it. Every call is now traced.
    client = agentreplay.wrap_openai(OpenAI())

    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": "Summarize this PR"}]
    )

    # Tokens, latency, cost: all captured automatically.
    # Open the AgentReplay desktop app to see traces in real time.

Stop Flying Blind with Your AI Agents

Download AgentReplay, add three lines of code, and see everything your agents do: in real time, on your machine, for free.